Serial vs. Parallel Processing Activity

In this activity, students use stackable blocks (Lego® or similar) as a model for learning about serial and parallel computer processing. Students find out about how the NSF National Center for Atmospheric Research (NSF NCAR) uses parallel processing and a supercomputer to compute very complex atmospheric data.

Learning Goals

- Students will learn about two methods of computing: serial processing and parallel processing.

- Students will learn that parallel processing methods have advantages when handling large amounts of data.

Learning Objectives

- Students will model a parallel processing system and experience its ability to handle more than one task at once.

- Students will evaluate the differences between serial processing and parallel processing and will learn that parallel processing is faster than serial processing.

- Students will understand the importance of parallel processing when computing complex problems or when working with a lot of data blocks.

Materials

- Stopwatch or similar timer (one for each student group)

- Stackable blocks (Lego® or similar), roughly 100-200 pieces

- Website Reference for Wrap-Up Discussion

Preparation

- Become familiar with the background information provided in this activity.

- Split students into even-sized groups of about 4-5 students each; each student team will function as a group of parallel processors.

- Choose one student from each group to work alone, as a serial processor. Alternatively or in addition, the teacher can work as a serial processor.

- Divide the blocks evenly, providing a set of blocks to each team of students. In addition, provide sets of blocks to the single students who are functioning as serial processors.

Introduction: Student Prompts

- Ask students to brainstorm what makes a supercomputer “super”. Is it bigger? Are its parts smaller? Is it faster?

- Help students make the connection between a supercomputer being composed of many computers and those computers (or rather, processors: the components that respond to and process instructions) working together as a team. Ask if they think a team of processors could calculate data faster than a regular, single-processor computer.

- Allow students to discover, during the course of the activity, that processors working as a team, or in parallel, complete tasks that can be divided up much faster than a single, serial processor.

Step-by-step Instructions

- Explain the block-stacking tasks to the students.

- Overall the goal is to first sort the colors and then assemble the blocks by color into the longest possible stack.

- Tell them that the parallel processing groups can color-sort and stack simultaneously, while the serial processor must finish color sorting first, then stack by color. That is, a student representing a serial processor can do only one task at a time.

- Arrange the students into even-sized groups. Choose one student in each group (or even the teacher) to serve as a single serial processor.

- Provide students with blocks.

- The students in each group will work on parallel processing tasks and will race the serial processor to compile data (blocks).

- Parallel processing team(s): The students working on parallel processing tasks can sort colors AND assemble the data blocks. (Parallel processing allows work on more than one task at a time.) Also, they are able to work together to complete the tasks.

- Serial processing individuals: Each student working on the serial processing task can only do one task at a time, and must complete each task before moving to the next. (They must first sort all the colors, then they can begin assembling the blocks for each color, and finally, they can assemble all the data blocks.) Each serial processing student is working alone.

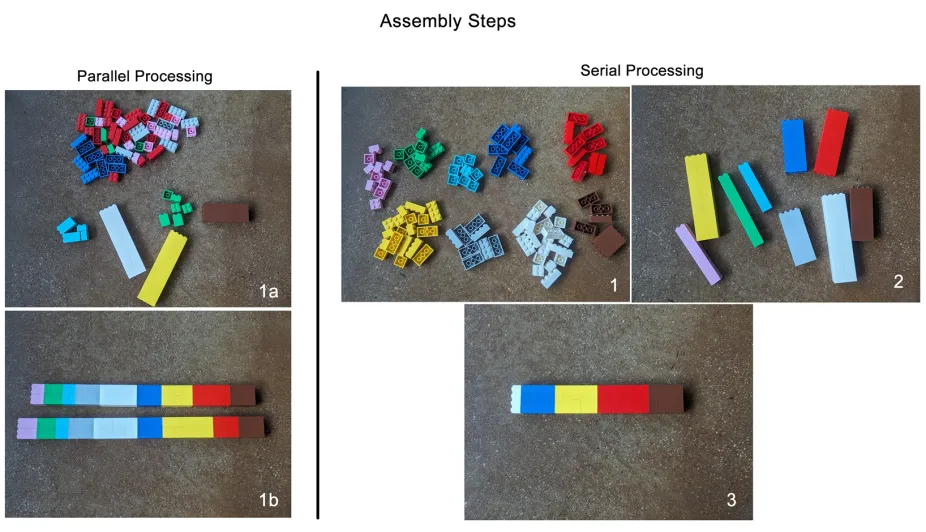

The parallel processing teams can complete more than one task at a time, so the assembly steps follow the images on the left where the team can sort colors while also assembling the data blocks (1a). The end result is the data compiled together in stacks (1b). Students working on serial processing must complete the first task before moving on to the next task. As shown on the right, the serial processing students must first sort the colors (1), then they can assemble blocks for each of the colors (2), and finally, they can assemble all of the data blocks (3).

NCAR-Wyoming Supercomputing Center
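For teachers who want to connect the block model to code, the two workflows described above can be sketched as a small timing model in Python. This is only an illustration under stated assumptions: the per-block costs (`SORT_COST`, `STACK_COST`, `MERGE_COST`) and the function names are hypothetical, the blocks are assumed to be dealt out evenly, and every student is assumed to work at the same speed.

```python
from collections import Counter

# Hypothetical costs in seconds, chosen only for illustration.
SORT_COST = 1.0    # time to place one block in its color pile
STACK_COST = 1.5   # time to snap one block onto a stack
MERGE_COST = 2.0   # time to join one single-color stack to the combined stack


def serial_time(blocks):
    """One student: sort every block, then stack every block, then combine the stacks."""
    colors = Counter(blocks)
    return (len(blocks) * SORT_COST
            + len(blocks) * STACK_COST
            + len(colors) * MERGE_COST)


def parallel_time(blocks, team_size):
    """A team: split the blocks, sort and stack each share at once, then combine."""
    # Deal the blocks out round-robin so the shares are as even as possible.
    shares = [blocks[i::team_size] for i in range(team_size)]
    # Everyone works at the same time, so the slowest member sets the pace.
    slowest = max(len(share) * (SORT_COST + STACK_COST) for share in shares)
    # Combining the finished stacks is coordination work that is not divided here.
    colors = Counter(blocks)
    return slowest + len(colors) * MERGE_COST


if __name__ == "__main__":
    blocks = ["red"] * 40 + ["blue"] * 40 + ["green"] * 40
    print("serial:", serial_time(blocks), "seconds")
    print("team of 4:", parallel_time(blocks, 4), "seconds")
```

In this simplified model the team finishes sooner because the sorting and stacking are shared, while the final combining step takes the same time for both and does not shrink as the team grows.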

5. When students and materials are in place, have each group start its timer and begin sorting and stacking the blocks.

6. Have each group pause its timer once the students in that parallel processing group have combined their individual stacks of blocks into a single, large, multi-colored stack. This represents the "task completion time" for their parallel processing group.

7. During this pause, lead a brief discussion with your students. Ask them to discuss the significant difference in progress between the parallel processor groups (which have entirely completed the assigned task) and the serial processors (which might have quite a bit more work to do!).

8. Restart the timers. Have the single, serial processor students continue to stack blocks.

9. Once each serial processor student finishes stacking blocks, each group can stop its timer and note the elapsed time.

10. See Wrap-Up Discussion.
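The two stopwatch readings from the steps above (the time at the pause and the final elapsed time) can be turned into a single "how much faster" number. The following is a minimal sketch; the function names `speedup` and `efficiency` and the sample times are assumptions for illustration, not measurements from the activity.

```python
def speedup(serial_seconds, parallel_seconds):
    """How many times faster the team finished than the single student."""
    if serial_seconds <= 0 or parallel_seconds <= 0:
        raise ValueError("times must be positive")
    return serial_seconds / parallel_seconds


def efficiency(serial_seconds, parallel_seconds, team_size):
    """Speedup per team member; 1.0 would mean no time was lost to coordination."""
    if team_size <= 0:
        raise ValueError("team_size must be positive")
    return speedup(serial_seconds, parallel_seconds) / team_size


if __name__ == "__main__":
    # Hypothetical stopwatch readings for a team of four students.
    serial_seconds = 300.0    # final time, when the serial processor finished
    parallel_seconds = 100.0  # time at the pause, when the team finished
    print("speedup:", speedup(serial_seconds, parallel_seconds))
    print("efficiency:", efficiency(serial_seconds, parallel_seconds, 4))
```

An efficiency below 1.0 is expected: it reflects the time the team spends coordinating and combining stacks.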

Wrap-Up Discussion

- What did the students notice about the time required to complete the tasks? Have them compare the time required to complete the entire block-stacking task by the parallel processing system versus the single, serial processor. How much faster was parallel processing? Why was parallel processing so much faster?

- Ask the students to consider the time lost by the parallel processing group as they worked together to assemble their individual, single-colored stacks into the overall, combined stack. Explain to the students that the time for this task is a standard feature of a parallel processing system. Guide them through a discussion comparing the small amount of time lost in coordinating their efforts versus the tremendous amount of time saved by having multiple processors and dividing up the overall task.

- Ask the students to contemplate how far one might go with this parallel processing approach. Do they think that even faster computers could be built with dozens or even hundreds of processors? Explain to students that modern supercomputers have many thousands (even hundreds of thousands) of processors.

- With advanced students, lead a discussion about how the work might be distributed among the processors if some of the sub-tasks were more complex than others.

- Why is faster, parallel processing needed? Discuss ways it can be used in modeling and simulations of weather, climate, and the Earth system or for other applications.
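For the discussion with advanced students about sub-tasks of unequal complexity, one simple strategy can be sketched in Python: hand out the largest tasks first, always to whichever processor currently has the least work. This is a common greedy heuristic used here purely as an illustration; it is not claimed to be how any particular supercomputer schedules work, and the function name `assign_tasks` and the task costs are hypothetical.

```python
import heapq


def assign_tasks(task_costs, num_workers):
    """Greedy balancing: give each task, largest first, to the least-loaded worker."""
    # Each heap entry is (current_load, worker_index); the smallest load pops first.
    heap = [(0, worker) for worker in range(num_workers)]
    heapq.heapify(heap)
    assignments = [[] for _ in range(num_workers)]
    for cost in sorted(task_costs, reverse=True):
        load, worker = heapq.heappop(heap)
        assignments[worker].append(cost)
        heapq.heappush(heap, (load + cost, worker))
    return assignments


if __name__ == "__main__":
    # Hypothetical task sizes: one large task and several small ones.
    tasks = [10, 4, 4, 3, 3, 2, 2, 2]
    for worker, share in enumerate(assign_tasks(tasks, 3)):
        print("worker", worker, "gets", share, "total", sum(share))
```

The whole job finishes only when the busiest worker is done, so the aim is to keep the largest total as small as possible. The greedy approach does not always find the best possible split, but it usually does better than dealing tasks out in their original order.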

Using Parallel Processing to Understand Our World

Supercomputers and parallel processing are used to solve complex problems. Researchers at NCAR study all components of the Earth system, from ocean floor to space weather (and everything in between). A supercomputer takes all of the collected data and compiles it so that researchers can make better decisions about our world. Consider the data “blocks” in this exercise as atmospheric data.

Dropsondes are released from planes flying high above hurricanes and collect important data about the storms.

NSF NCAR

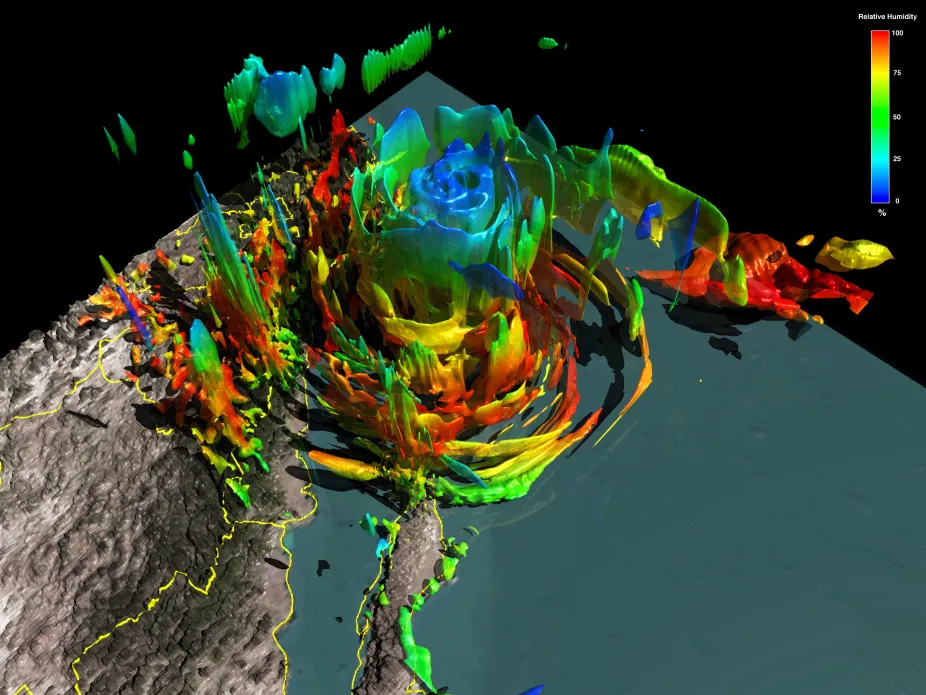

NCAR researchers use various instruments to collect data. One instrument in particular is the dropsonde, used to study hurricanes. Researchers use dropsondes to collect data about wind speed and pressure in the atmosphere to determine how rapidly a hurricane can evolve. One of the storms they collected data for was Hurricane Odile, a category 4 storm that caused widespread damage to the Baja California peninsula in September 2014. NCAR supercomputers were able to take the observations and data, much like the Lego® blocks, and process them to create a model of the hurricane. This model helps set up a virtual lab for scientists, allowing them to adjust conditions and test the storm again and again to better understand the elements of a hurricane.

A computer-generated model of Hurricane Odile, a category 4 storm that came ashore on the Baja California peninsula in September 2014, leaving behind widespread damage, flooding, and power outages.

NSF NCAR

Supercomputers at NSF NCAR and Elsewhere

There are many supercomputers in the world. Supercomputers use parallel processing to run models of extremely complex systems, including:

- Global climate models

- Models of supernova explosions in space

- Aerodynamic modeling of state-of-the-art jet aircraft

- The complex folding patterns of proteins, which help us understand diseases like Alzheimer's and cystic fibrosis

- Simulations of the ways tsunamis interact with coastlines

- Modeling nuclear explosions, limiting the need for real nuclear testing

NCAR’s 42nd supercomputer, called Derecho, became operational in 2023.

NSF NCAR

Derecho can process 19.8 quadrillion calculations per second and complete several hundred jobs at once. Learn more about Derecho and supercomputing at NSF NCAR by taking a virtual tour.
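To give students a feel for the size of that number, the short calculation below estimates how long one person would need to match a single second of Derecho's work. It is a rough sketch: the rate of one calculation per second for a person is an assumption made for illustration, and the function name is hypothetical.

```python
# 19.8 quadrillion calculations per second, as stated above.
CALCS_PER_SECOND = 19.8e15
SECONDS_PER_YEAR = 365.25 * 24 * 60 * 60


def years_for_one_person(derecho_seconds=1.0, person_calcs_per_second=1.0):
    """Years a person would need to do the work Derecho does in the given time."""
    total_calculations = CALCS_PER_SECOND * derecho_seconds
    person_seconds = total_calculations / person_calcs_per_second
    return person_seconds / SECONDS_PER_YEAR


if __name__ == "__main__":
    years = years_for_one_person()
    print(f"About {years / 1e6:.0f} million years")
```

Under these assumptions, the answer is on the order of 600 million years for one second of supercomputer time.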

This resource was updated with input from Summer Wasson, NSF NCAR-Wyoming Supercomputing Center.